SPISim’s MPro Overview:

Design Concept:

Signal and power integrity analysis for a system involves many design and modeling factors. To predict whether a design will work reliably, one needs a systematic method for building a model that takes these variables into account. Such a model can be correlated with an existing data set to prove its validity, and can then be used to predict the cases and probabilities in which the design will fail. SPIMPro (MPro for short) is designed to facilitate the creation of such models and predictions. It is implemented as a straightforward analysis flow and presented in a simple yet versatile GUI.

Environment:

MPro is a top-level module that runs on SPISim’s framework. This means MPro is cross-platform, runs in an all-in-one environment, and supports further extension via add-ons such as BPro. By itself, MPro provides comprehensive, end-to-end, general modeling capabilities for system analysis.

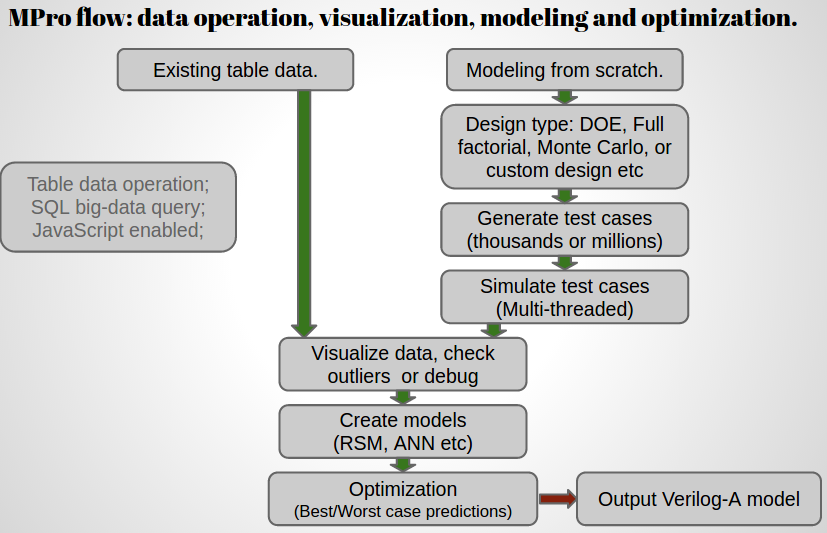

Modeling flow:

Here is MPro’s general modeling flow:

Flow details:

Generally speaking, system analysis (e.g. for signal and power integrity) involves the following steps:

- Define variables: The engineer should decide which factors contribute to the performance parameters. In a system, these can be the models used, routing lengths, layer stackups, signal speeds and different termination schemes. It is desirable to keep a system analysis to roughly 12 or fewer variables.

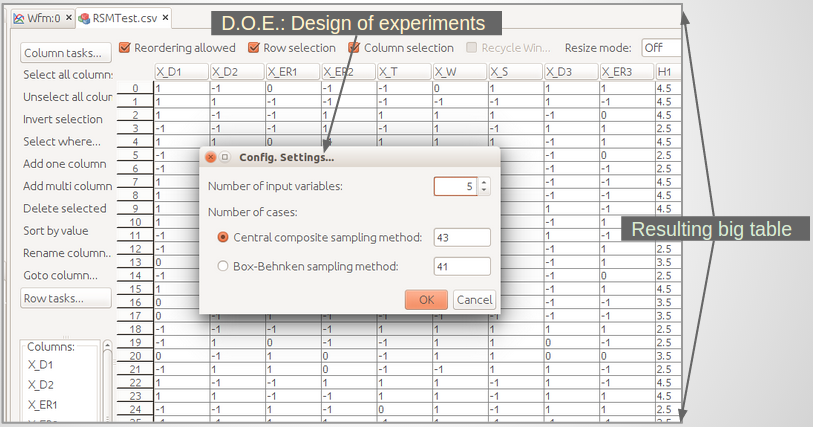

- Create a design: Once the variables are defined, one can create a design to evaluate the impact of each variable. Several designs are frequently used, such as design of experiments, Monte Carlo or full factorial.

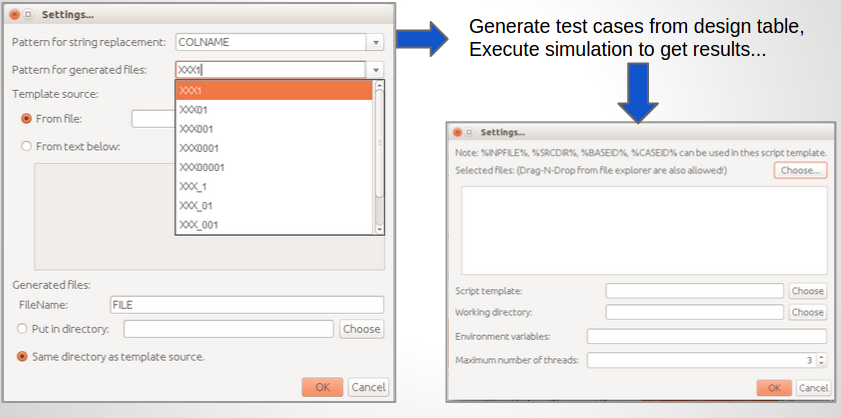

- Generate test cases: Each design may have hundreds or even millions of test cases, each of which exercises the defined variables in a different way. A modeling flow therefore includes a step to generate these test cases from a pre-defined template and the corresponding variable values for each test case.

- Execute test cases: This is the evaluation phase for the test cases created in the previous step. The evaluation can be deterministic, such as circuit simulation, or based on lab measurements.

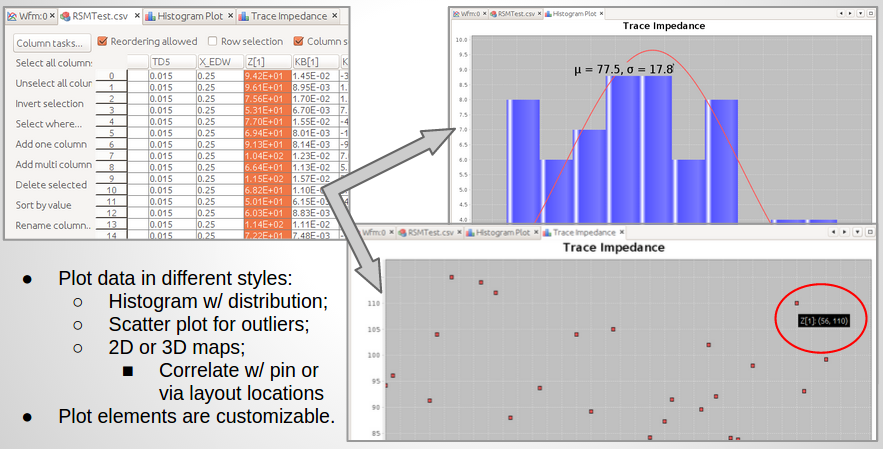

- Post-process and collect results: Once the simulation or measurement results are available, a step to extract a performance report or quality metrics is usually included. Bit error rate, eye opening and insertion loss are examples of such metrics. These measurements must be performed for every test case run so that the output targets can be modeled.

- Visualize data to check/debug: One often needs to visualize the outputs to identify design or simulation issues before modeling. Plots can be presented in 2D, such as distribution or scatter plots, or in 3D, such as the correlation of the current contributions associated with each capacitor in a package design.

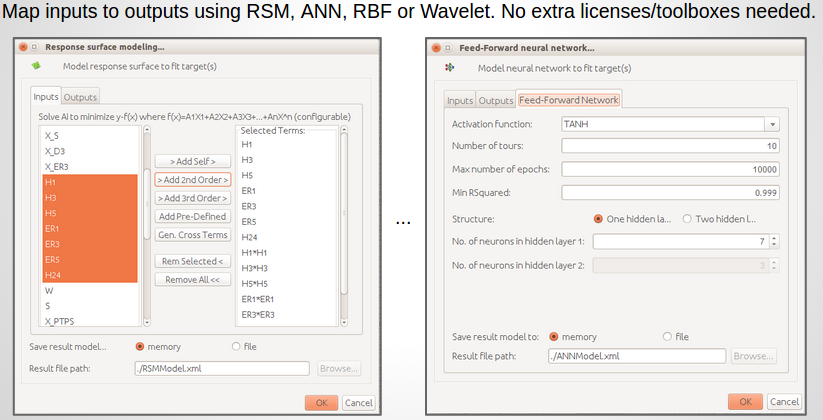

- Create model: Once inputs (the variables) and outputs (the performance measurements) are available, one can create a model that maps inputs to outputs. Depending on the complexity, different models may be used; response surface models and artificial neural networks are common choices.

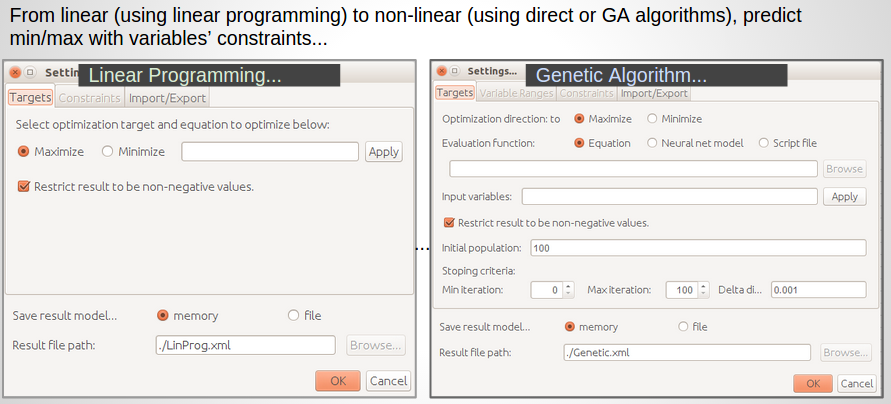

- Optimization (prediction): If the constructed model correlates well with the existing data set (which usually means small residuals), it can be used to predict the system’s future performance. This step usually includes optimization to find the best- or worst-case conditions. Depending on the model (linear or non-linear), linear programming or genetic algorithms may be used to predict extreme conditions.

- Report out: A report showing the impact/weight of each variable, along with the created models and the predicted best/worst cases, should be produced for review and design reference.
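
As a rough illustration of the "Define variables", "Create a design" and "Generate test cases" steps above, the following Python sketch builds a full factorial design and renders one test case per design row from a template. The variable names, levels and the netlist-like template are hypothetical; this is not MPro's file format, API or implementation.

```python
import itertools
from string import Template

# Hypothetical variables and levels (illustrative values only).
variables = {
    "trace_len_in": [2.0, 4.0, 6.0],
    "data_rate_gbps": [8.0, 16.0],
    "rterm_ohm": [40.0, 50.0, 60.0],
}

# Hypothetical netlist-like template; not a real MPro or simulator format.
template = Template(
    "* case $case_id\n"
    ".param len=$trace_len_in rate=$data_rate_gbps rterm=$rterm_ohm\n"
)


def full_factorial(levels):
    """Return one dict per combination of variable levels."""
    names = list(levels)
    return [dict(zip(names, combo))
            for combo in itertools.product(*levels.values())]


def generate_test_cases(design, tmpl):
    """Fill the template once per design row and return the rendered texts."""
    return [tmpl.substitute(case_id=i, **row) for i, row in enumerate(design)]


design = full_factorial(variables)
test_cases = generate_test_cases(design, template)

print(len(test_cases), "test cases generated")
print(test_cases[0])
```

A full factorial design grows multiplicatively with the number of variables and levels, which is one reason to limit the variable count or to switch to a sampled design such as Monte Carlo.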

MPro has functions to support each of the steps mentioned above. In addition, it is fully integrated with tried-and-true algorithms/libraries, so no extra MCR or toolbox licenses are required.

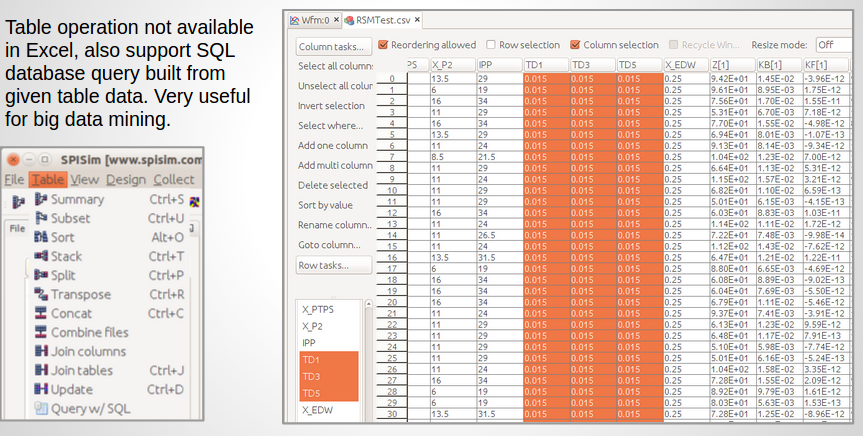
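
To make the "Create model" and "Optimization" steps of the flow more concrete, here is a minimal Python sketch that fits a quadratic response surface to two coded variables by least squares and then searches it for the worst case. Everything in it is an assumption for illustration: the data come from a made-up function (`synthetic_metric`), the inputs are coded to the range -1 to 1, and a brute-force grid search stands in for the linear programming or genetic algorithms mentioned above. It does not reflect how MPro implements these steps.

```python
import itertools


def features(x1, x2):
    """Quadratic response-surface terms for two inputs."""
    return [1.0, x1, x2, x1 * x1, x2 * x2, x1 * x2]


def solve(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in reversed(range(n)):
        s = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - s) / m[r][r]
    return x


def fit_rsm(inputs, outputs):
    """Least-squares fit via the normal equations (X^T X) beta = X^T y."""
    rows = [features(*p) for p in inputs]
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(rows, outputs)) for i in range(k)]
    return solve(xtx, xty)


def predict(beta, x1, x2):
    """Evaluate the fitted response surface at one point."""
    return sum(b * f for b, f in zip(beta, features(x1, x2)))


def synthetic_metric(x1, x2):
    """Made-up performance metric standing in for simulation results."""
    return 10 - 2 * x1 + x2 - 3 * x1 * x1 - x2 * x2 + 0.5 * x1 * x2


# Three-level full factorial design in coded units.
levels = [-1.0, 0.0, 1.0]
inputs = list(itertools.product(levels, levels))
outputs = [synthetic_metric(*p) for p in inputs]

beta = fit_rsm(inputs, outputs)

# Check correlation with the existing data via the largest residual.
max_residual = max(abs(predict(beta, *p) - y) for p, y in zip(inputs, outputs))

# Brute-force search for the worst case (lowest predicted metric).
grid = [i / 10 for i in range(-10, 11)]
worst_point = min(itertools.product(grid, grid), key=lambda p: predict(beta, *p))
worst_value = predict(beta, *worst_point)

print("max residual:", max_residual)
print("worst case at", worst_point, "predicted", worst_value)
```

Because the synthetic data are themselves quadratic, the residual here is essentially zero; with real simulation or measurement data, the residual is what indicates whether the model is trustworthy enough for prediction.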

Utility functions:

MPro also has various utility functions to facilitate table operations that are not directly available in common tools like Excel. Furthermore, MPro can create a database from a given table and supports SQL-like data queries for big data mining or analysis.
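
The idea of turning a result table into a queryable database can be sketched with Python's built-in `sqlite3` module. The table name, column names and values below are hypothetical, and this is only an analogy for the kind of SQL-like query described above, not MPro's own database or query syntax.

```python
import sqlite3

# Hypothetical result table: one row per test case (values are made up).
rows = [
    (0, 2.0, 40.0, 182.0),
    (1, 2.0, 50.0, 195.0),
    (2, 6.0, 40.0, 96.0),
    (3, 6.0, 50.0, 121.0),
]

# Load the table into an in-memory database.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE results ("
    "case_id INTEGER, trace_len_in REAL, rterm_ohm REAL, eye_height_mv REAL)"
)
conn.executemany("INSERT INTO results VALUES (?, ?, ?, ?)", rows)

# Average eye height per trace length.
summary = conn.execute(
    "SELECT trace_len_in, AVG(eye_height_mv) FROM results "
    "GROUP BY trace_len_in ORDER BY trace_len_in"
).fetchall()

# Test cases whose eye height falls below a hypothetical 100 mV limit.
failing = conn.execute(
    "SELECT case_id FROM results WHERE eye_height_mv < ? ORDER BY case_id",
    (100.0,),
).fetchall()

print(summary)
print(failing)
conn.close()
```

Grouping and filtering of this kind is awkward in a spreadsheet once a design reaches thousands of test cases, which is where a database-backed query becomes useful.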